What is Algomax?

Algomax is an AI evaluation platform built for teams that develop, refine, and deploy Large Language Models (LLMs) and Retrieval-Augmented Generation (RAG) systems. Unlike generic benchmarking tools, Algomax goes beyond accuracy and latency: it delivers human-aligned assessments of coherence, factual grounding, instruction adherence, and contextual relevance. It turns subjective model behavior into interpretable signals that teams can act on, shortening iteration cycles and building trust in production-ready AI.

How to use Algomax?

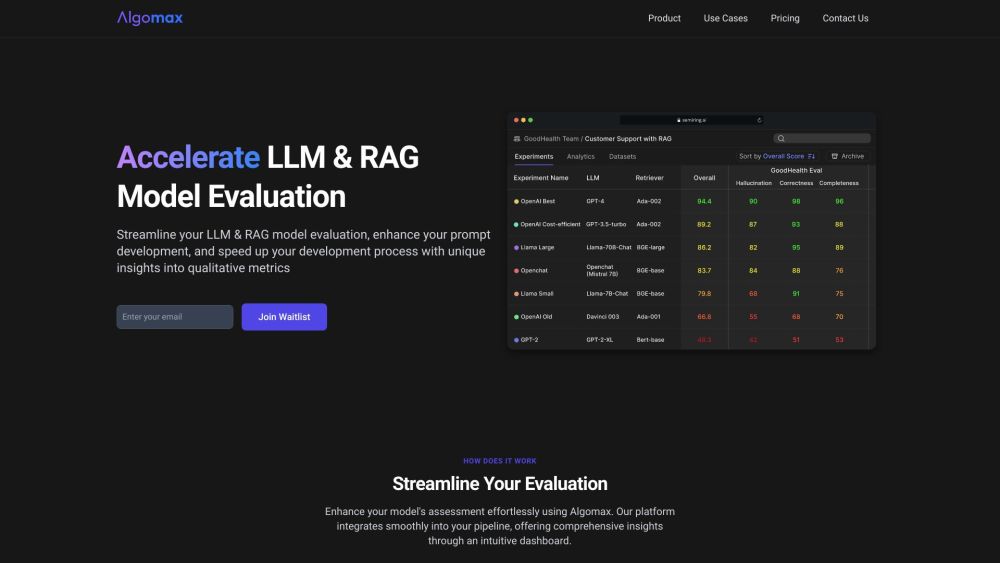

Getting started with Algomax takes minutes: connect your LLM or RAG endpoint via API, define evaluation scenarios (e.g., QA fidelity, hallucination resistance, or prompt robustness), and launch automated test suites. The unified dashboard shows real-time performance trends, side-by-side model comparisons, and qualitative breakdowns that explain *why* a response succeeded or failed. Whether you're validating fine-tuned models, stress-testing retrieval pipelines, or optimizing prompts across domains, Algomax fits into your existing workflow with no code rewrites required.
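To make the connect, define, and launch workflow concrete, here is a minimal local sketch in Python. It does not use Algomax's actual API, which is not documented here. Every name in it (`Scenario`, `run_suite`, `stub_model`) is hypothetical, and the stub stands in for a real LLM or RAG endpoint. It only illustrates the general shape of a scenario-based test suite.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Scenario:
    """One evaluation case: a prompt plus a check on the response (hypothetical structure)."""
    name: str
    prompt: str
    check: Callable[[str], bool]


def run_suite(model: Callable[[str], str], scenarios: List[Scenario]) -> Dict[str, bool]:
    """Send each scenario's prompt to the model and record pass/fail by scenario name."""
    return {s.name: s.check(model(s.prompt)) for s in scenarios}


def stub_model(prompt: str) -> str:
    """Stand-in for a real LLM or RAG endpoint; swap in a call to your own service."""
    if "capital of France" in prompt:
        return "Paris"
    return "I don't know."


# Two illustrative scenarios mirroring the categories named above.
scenarios = [
    Scenario("qa_fidelity", "What is the capital of France?",
             lambda r: "Paris" in r),
    Scenario("hallucination_resistance", "Who won the 2090 World Cup?",
             lambda r: "don't know" in r.lower()),
]

results = run_suite(stub_model, scenarios)
pass_rate = sum(results.values()) / len(results)
```

In practice the checks would be richer than substring matches (for example, graded rubrics or reference comparisons), but the structure stays the same: a model callable, a list of scenarios, and an aggregated result.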